Databricks Unity Catalog External Tables


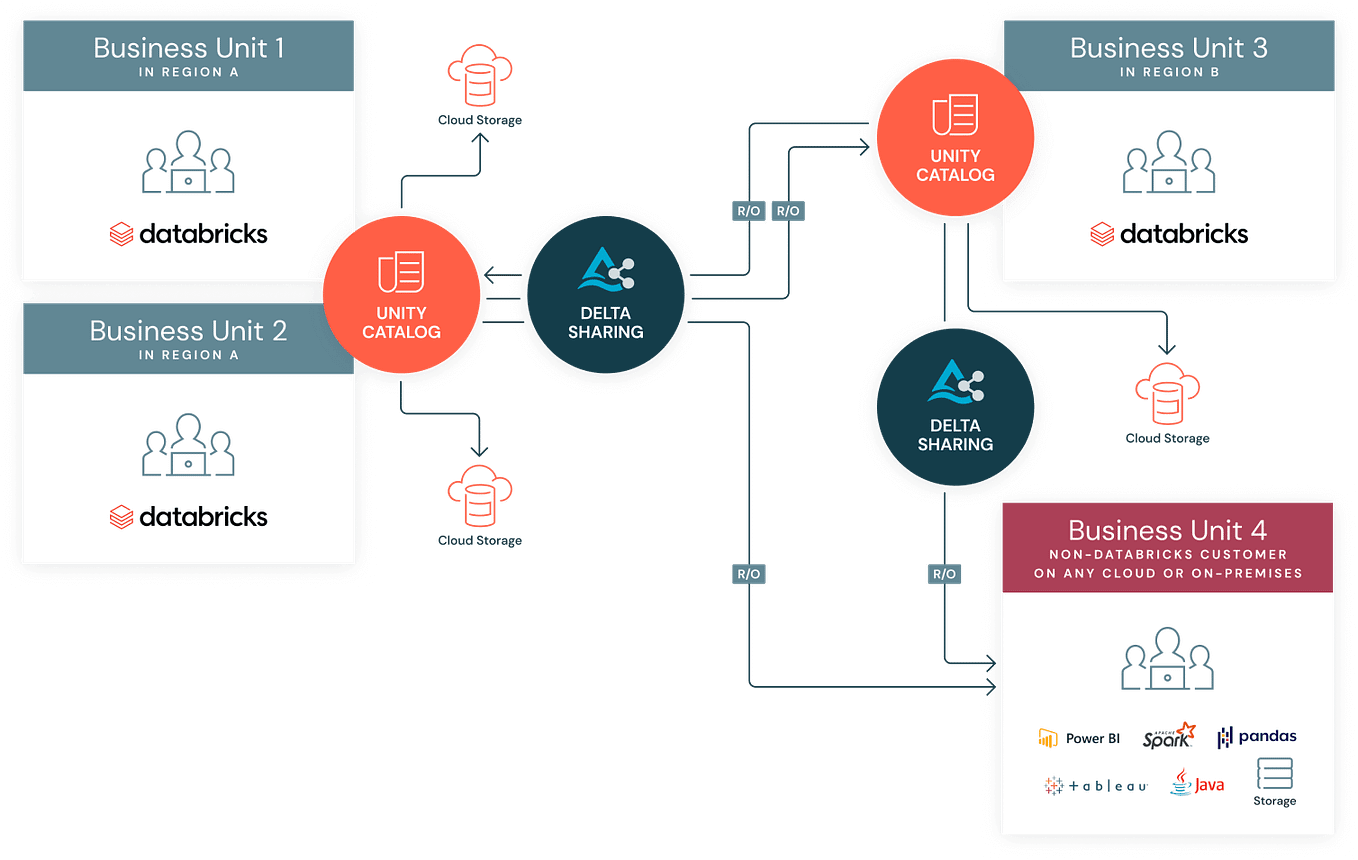

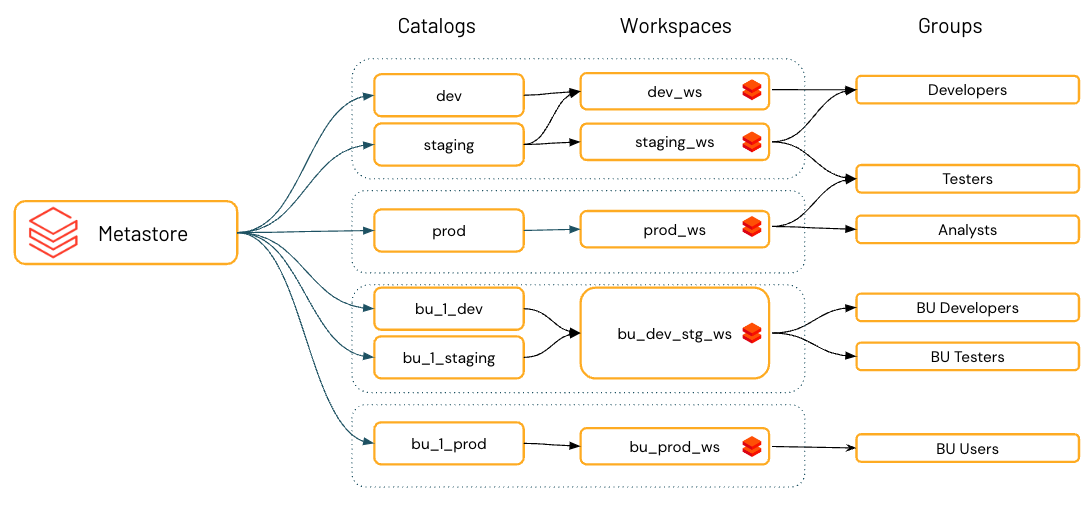

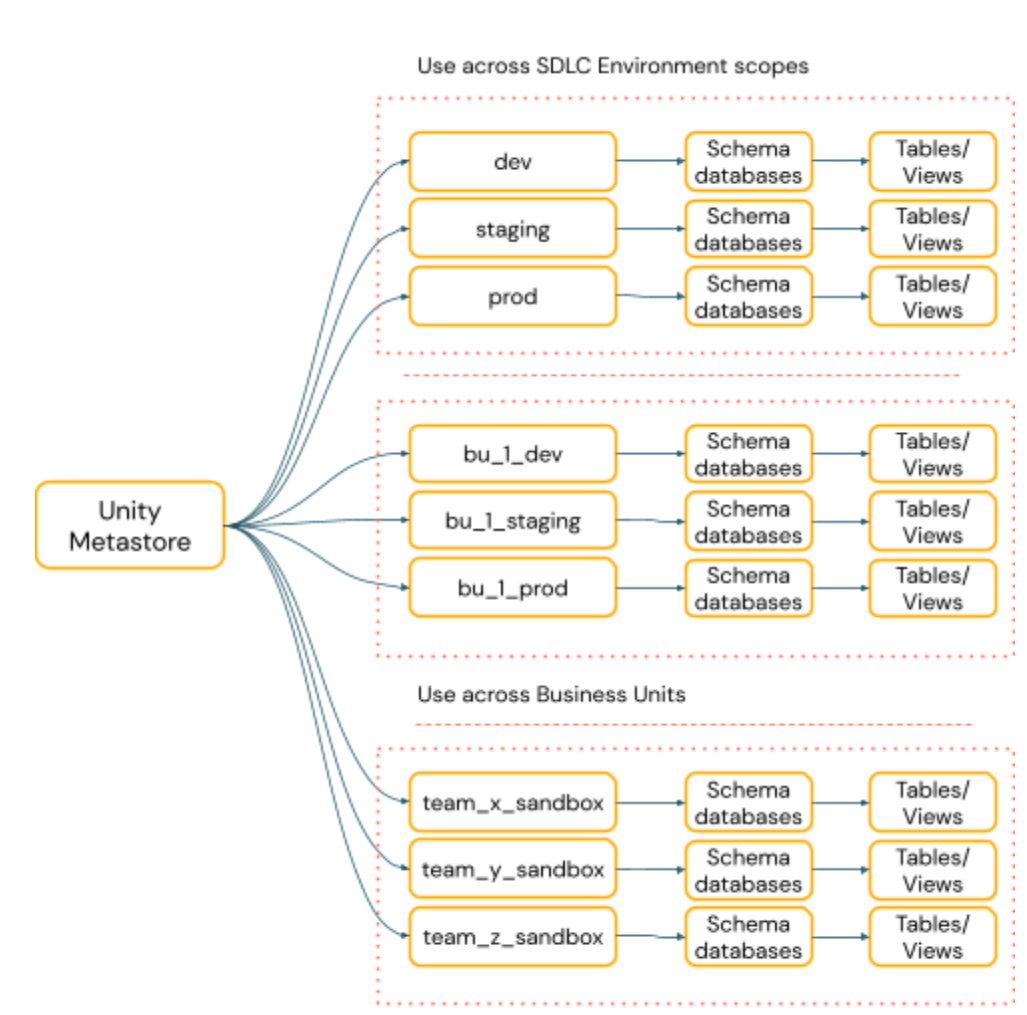

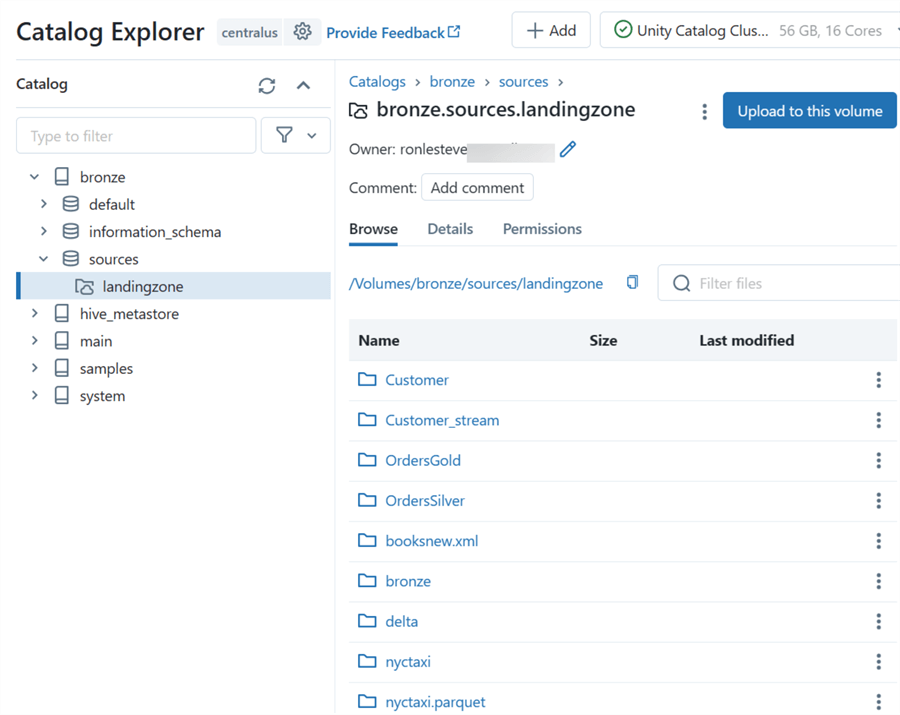

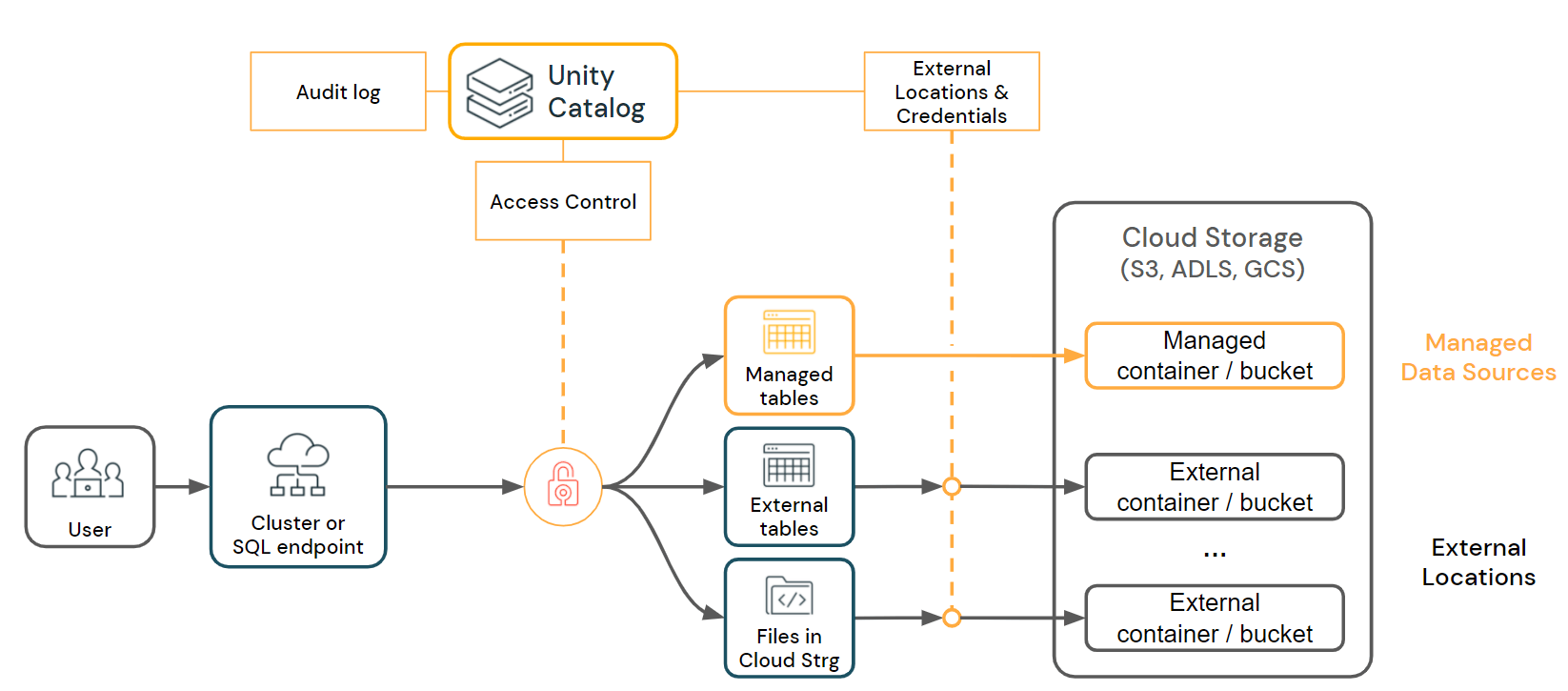

Databricks offers a unified platform for data, analytics and AI. Unity Catalog (UC) is the foundation for all governance and management of data objects in the Databricks Data Intelligence Platform. Since its launch several years ago, Unity Catalog has …

External tables in Databricks are similar to external tables in SQL Server. We can use them to reference a file or folder that contains files with similar schemas.

Databricks, including Azure Databricks, provides access to Unity Catalog tables using the Unity REST API and Iceberg REST catalog. Learn how to work with foreign catalogs that mirror an external database, using Databricks Lakehouse Federation. A related topic is sharing the Unity Catalog across Azure Databricks environments.

To use the examples in this tutorial, your workspace must have Unity Catalog enabled. The examples use a Unity Catalog volume to store sample data. Learn how to ingest data, write queries, produce visualizations and dashboards, and configure … This article describes how to use the add data UI to create a managed table from data in Azure Data Lake Storage or Amazon S3 using a Unity Catalog external location. Databricks recommends configuring DLT pipelines with Unity Catalog.
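As a rough illustration of the REST access mentioned above, the sketch below builds (but does not send) a request for one table's metadata. The workspace host, table name, and token are hypothetical placeholders, and the path reflects the Unity Catalog tables endpoint as commonly documented; check the API reference for your workspace before relying on it.

```python
from urllib.parse import quote
from urllib.request import Request


def build_get_table_request(host: str, full_name: str, token: str) -> Request:
    """Build, without sending, a GET request for a table's metadata.

    `host`, `full_name`, and `token` are illustrative inputs; the endpoint
    path is an assumption based on the Unity Catalog tables API.
    """
    # Percent-encode the three-level name (catalog.schema.table) for the URL path.
    encoded_name = quote(full_name, safe="")
    url = f"https://{host}/api/2.1/unity-catalog/tables/{encoded_name}"
    return Request(url, headers={"Authorization": f"Bearer {token}"})


# Hypothetical workspace host and table name, for illustration only.
req = build_get_table_request(
    "example.cloud.databricks.com", "main.default.trips", "<personal-access-token>"
)
print(req.get_method(), req.full_url)
```

Keeping the request construction separate from sending it makes the URL and headers easy to inspect before any network call is made.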
External tables support many formats other than Delta Lake, including Parquet, ORC, CSV, and JSON. An external location is an object … A metastore admin must enable external data access for each …

DLT now integrates fully with Unity Catalog, bringing unified governance, enhanced security, and streamlined data pipelines to the Databricks lakehouse. Simplify ETL, data warehousing, governance and AI on the Data Intelligence Platform. To create a monitor, see "Create a monitor using the Databricks UI".

Load and transform data using Apache Spark DataFrames

To use the examples in this tutorial, your workspace must have Unity Catalog enabled. Pipelines configured with Unity Catalog publish all defined materialized views and streaming tables to … For managed tables, Unity Catalog fully manages the lifecycle and file layout.
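The difference between a managed table and an external table shows up in the DDL: an external table names a storage path with `LOCATION`, while a managed table omits it. The following is a minimal sketch that only generates the SQL text; the helper `create_table_sql`, the table names, and the `s3://` path are hypothetical, and the format list is limited to the formats named in this article.

```python
from typing import Optional

# Formats named in this article for external tables (plus Delta Lake itself).
SUPPORTED_FORMATS = {"DELTA", "PARQUET", "ORC", "CSV", "JSON"}


def create_table_sql(
    full_name: str,
    columns: str,
    fmt: str = "DELTA",
    location: Optional[str] = None,
) -> str:
    """Return a CREATE TABLE statement; a LOCATION clause makes it external."""
    fmt = fmt.upper()
    if fmt not in SUPPORTED_FORMATS:
        raise ValueError(f"unsupported format: {fmt}")
    stmt = f"CREATE TABLE IF NOT EXISTS {full_name} ({columns}) USING {fmt}"
    if location is not None:
        # External table: the data stays at the given path.
        stmt += f" LOCATION '{location}'"
    return stmt


# Hypothetical names and path, for illustration only.
managed = create_table_sql("main.default.trips", "id INT, fare DOUBLE")
external = create_table_sql(
    "main.default.trips_raw",
    "id INT, fare DOUBLE",
    fmt="parquet",
    location="s3://example-bucket/landing/trips",
)
print(managed)
print(external)
```

The generated statements could then be run in a notebook or SQL editor; the path would need to fall under an external location the user is allowed to use.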
Data lineage in Unity Catalog.

I am not sure if I am missing something, but I just created an external table using an external location, and I can still access the data both through the table and by accessing the files directly. Unity Catalog governs data access permissions for external data for all queries that go through Unity Catalog but does not manage data lifecycle, optimizations, storage.
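Access through the table and direct access to the files are granted separately, which may explain the behavior described above. The sketch below generates the two kinds of `GRANT` statement involved; the helper `grant_sql`, the principals, and the object names are hypothetical, and the statements are only built as strings, not executed.

```python
def grant_sql(privilege: str, securable_type: str, securable_name: str, principal: str) -> str:
    """Return a GRANT statement for a Unity Catalog securable object."""
    # Principals are quoted with backticks because they may contain
    # characters such as '@' or spaces.
    return f"GRANT {privilege} ON {securable_type} {securable_name} TO `{principal}`"


# Hypothetical table, external location, and group names, for illustration only.
table_grant = grant_sql("SELECT", "TABLE", "main.default.trips_raw", "analysts")
files_grant = grant_sql("READ FILES", "EXTERNAL LOCATION", "landing_zone", "data_engineers")
print(table_grant)
print(files_grant)
```

Granting only `SELECT` on the table, and keeping `READ FILES` on the external location to a small group, is one way to steer most users toward table-level access.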

Query external Iceberg tables created and managed by Databricks with

13 Managed & External Tables in Unity Catalog vs Legacy Hive Metastore

Unity Catalog best practices Databricks on AWS

Unity Catalog Setup A Guide to Implementing in Databricks

Generally Available: Publishing from Unity Catalog to the Microsoft Power BI Service Databricks Qiita

An Ultimate Guide to Databricks Unity Catalog — Advancing Analytics

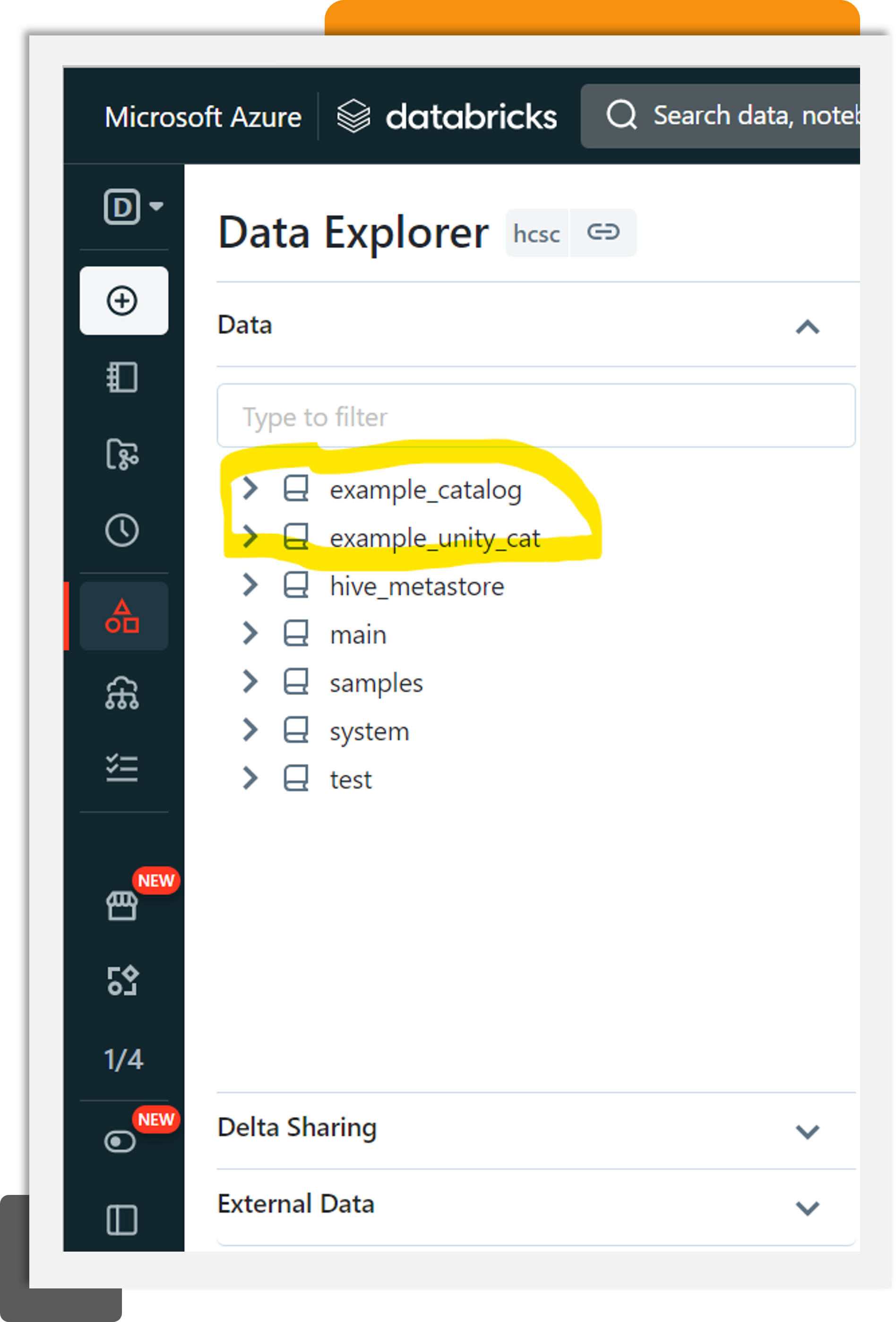

Unity Catalog Enabled Databricks Workspace by Santosh Joshi Data

Databricks Unity Catalog and Volumes Step-by-Step Guide

A Comprehensive Guide Optimizing Azure Databricks Operations with

Introducing Unity Catalog A Unified Governance Solution for Lakehouse

A Metastore Admin Must Enable External Data Access For Each.

Governs Data Access Permissions For External Data For All Queries That Go Through Unity Catalog But Does Not Manage Data Lifecycle, Optimizations, Storage.

Access Control In Unity Catalog.

The Examples In This Tutorial Use A Unity Catalog Volume To Store Sample Data.
